AI in Financial Services Has a Data Problem. Is Context Enough Or Do We Need More

Everyone is talking about context. Very few people have actually built for it.

Part 2 of 3 — Kurrent Thought Leadership Series

Key Takeaways — Short Version

- AI is in every conversation — and the foundation underneath is the next frontier. Financial institutions are moving. The problem isn’t adoption. It’s what most of them are building on top of.

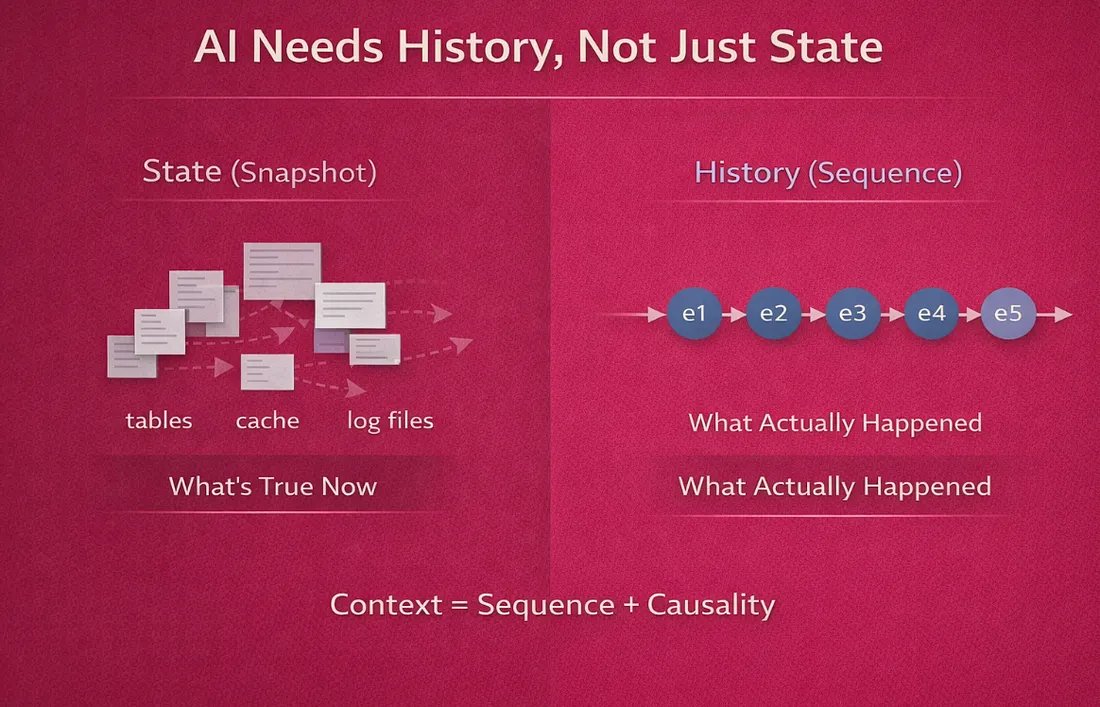

- Context is a word the market sold. Most teams don’t actually have it. Vendors delivered retrieval. Context without causality is just noise with better search.

- Causality is the piece that’s hardest to get right. Knowing what happened is not the same as knowing why, in what sequence, and what state the system was in when it did. AI needs the second thing.

- Without a single source of truth, both fall apart. Every AI implementation pulling from multiple systems is working from a version of history that nobody fully trusts.

- Events are the only data structure that preserves all three. Context, causality, and a single source of truth are natural properties of an event-native system. In everything else, they have to be engineered separately — which is exactly where most teams are spending their time right now.

AI comes up in every conversation now.

Payments companies. Regional banks. Lending platforms. Compliance teams. Everyone is somewhere on the journey. Some are experimenting. Some are in production. Some are trying to figure out why the thing they built six months ago isn’t performing the way they expected.

Adoption isn’t the problem. Financial institutions are moving. The problem is what most of them are building on top of.

When you dig into how these AI implementations are actually structured — where the data comes from, how context gets assembled, what the model is actually working with — you find the same gap everywhere. And it’s not a model problem.

It’s the same data architecture problem we talked about in Part 1. Just showing up one layer higher.

Everyone talks about context these days. I’m not certain they understand what it actually requires.

Context Is a Word the Market is Selling Us

Somewhere along the way, the AI industry decided that context was the answer to everything. RAG pipelines. Vector databases. Retrieval layers. Semantic search. The pitch is always some version of the same thing: give your model the right context and it will perform.

That’s not wrong. But it skips over a harder question: what actually makes context useful?

A fraud analyst reviewing a flagged transaction doesn’t just need information about that transaction. They need to understand the sequence of events that led to it. What changed in that account three weeks ago. What the merchant’s history looks like. What the system knew — and didn’t know — at the moment the decision was made.

That’s not retrieval. That’s not a vector search over document chunks. That’s causality.

Most AI implementations in financial services are retrieving data. They’re pulling from current state tables, log files, warehouse exports, and API responses. They’re assembling something that looks like context. But what they’re missing is the thread that connects those pieces — the sequence, the timing, the cause and effect that makes the pattern meaningful.

Retrieval is not context. Context without causality is just noise with better search.

Standard RAG chunks documents and searches for relevance. It finds pieces that match. What it cannot do is preserve the order in which things happened, the causal relationships between events, or the exact system state at any given moment. For document retrieval, that’s fine. For financial services AI, it’s a fundamental limitation.

A Different Approach: Event-RAG

When we built the AI demos for our fintech and payments platforms, we ran into this limitation quickly. The standard RAG pattern worked fine for static documents. It fell apart the moment we needed the AI to reason about what actually happened with a specific account over time.

So we took a different approach. Instead of chunking documents, we fed the AI a complete, chronologically ordered event stream directly from KurrentDB. Every event in an account’s history — onboarding, transactions, fraud checks, state changes, compliance flags — merged in exact temporal order and sent as context. We call this Event-RAG.

The difference is simple. Standard RAG finds the relevant pieces while Event-RAG preserves the sequence. Every event carries a correlation ID and causation ID that trace which action triggered which response. This means that the AI can see the actual story rather than static data or information.

Customer Journey and Narrative AI: History That Speaks for Itself

The Customer Journey demo starts with account opening — the complete onboarding sequence, KYC checks, initial funding, early transactions — and follows the account forward through deposits, withdrawals, and every state change in between.

This isn’t a dashboard that shows current balances and recent activity. It’s a reconstructed timeline of everything that happened, in order, from the first event to the last. Every decision the system makes is visible. Every service that touched the account left a trace.

Narrative AI sits on top of this. It takes the complete event stream and produces a plain-language account of what happened — not a summary of current state, but an actual story. Why was this account flagged? What the pattern looked like over time. What changed, when it changed, and what triggered the change.

Most systems can tell you what an account looks like right now. Very few can tell you the story of how it got there.

What makes this different from a standard reporting tool is that the AI analysis is written back to KurrentDB as an event. That means you can run a customer journey analysis today, run it again in three months after new transactions arrive, and compare the two side by side. The AI’s own conclusions become part of the event history — queryable, replayable, and permanent.

Over time this builds something that didn’t exist before: a longitudinal analytical record of how an AI’s understanding of a customer evolved. Not just what the AI said. What it knew when it said it, and how that changed.

Anomaly Detection: Learning From the Source of Truth

Most anomaly detection in financial services works from baselines. A pipeline runs on a schedule, aggregates transaction data into a feature table, and the model compares new transactions against that table. The baseline reflects history — which at the end of the day is nothing but a compressed, summarized version of the actual story — in movie parlance a trailer rather than the actual movie.

The anomaly detection demo takes a different approach. It samples 5 to 20 accounts directly from the live event streams and builds a cross-account baseline from the raw event history. No preprocessing. No feature engineering pipeline. No ETL. The model learns from the same source of truth the operational system uses.

The result is a baseline that reflects actual behavioral patterns — not a summary of them. When a new account’s event stream deviates from that baseline, the deviation is understood in context.

Agent Audit Trail: Knowing What Your Automation Did and Why

As financial institutions automate more decisions — fraud approvals, onboarding steps, compliance checks, risk escalations — a new problem appears. The automation works. But nobody can easily answer: what did each automated service actually decide, and on what basis? — in short visibility or observability.

The Agent Audit Trail demo addresses this directly. Every automated service decision is captured as an event, tagged with actor metadata that identifies which service made the decision, what it was responding to, and what it concluded. The audit trail is not a log file. It’s a structured event stream — queryable, replayable, and permanent.

If an onboarding workflow approved an account that later turned out to be fraudulent, you can reconstruct exactly which services were involved, what each one decided, in what order, and whether any of those decisions deviated from expected behavior. The complete chain of automated decisions is auditable from the event stream.

This demo consistently surfaces a reaction the other demos don’t: relief.

Risk teams know their automated systems are making decisions they can’t fully audit. Showing them a native audit trail — built from the same event stream as the operational system, not a separate logging layer — addresses a problem they’ve been managing around for years.

Multi-Agent Orchestration: When Services Talk Through Events

The conversation around agentic AI usually focuses on what individual agents can do. What gets less attention is how multiple agents — or multiple services — coordinate without creating the tight coupling that makes systems brittle.

In most architectures, services coordinate through direct API calls. Service A calls Service B, waits for a response, then decides what to do next. This works until it doesn’t. When one service is slow, the whole chain slows down. When one service changes its interface, every caller has to be updated.

The Multi-Agent Orchestration demo shows a different model. Services — or agents — coordinate by publishing and subscribing to events. Service A publishes an event. Service B picks it up, does its work, publishes its own event. The coordination happens through the event stream, not through direct calls.

What KurrentDB adds is that this fan-out is fully visible and permanently recorded. Every step in a multi-service or multi-agent workflow leaves an event. The complete orchestration — who did what, in response to what, in what order — is reconstructable from the event stream at any time.

When agents coordinate through events, the orchestration is auditable by default.

AI Governance: The Thing You Get for Free

Every one of these demos shares a very important aspect that we didn’t set out to build but ended up being one of the most important things we could show.

Because every AI analysis is written back to KurrentDB as an immutable event, the complete history of what the AI knew, when it knew it, and what it concluded is permanently recorded alongside the operational data it analyzed.

Run a fraud analysis on an account today. Run it again next week after new transactions arrive. The two analyses sit in the same event stream, timestamped, comparable side by side. You can see exactly how the AI’s assessment evolved as the account history grew. You can see what new information changed the conclusion. You can reconstruct what the AI would have said at any point in the past.

This is AI governance without a governance layer. The AI’s decision trail is part of the event history — subject to the same immutability, queryability, and replay as every other event in the system.

For regulated institutions, this matters in a specific way. Model risk management frameworks like SR 11-7, the EU AI Act, CFPB adverse action requirements — all ask the same fundamental question: can you show what the AI knew, what it decided, and why? Most institutions are building separate systems to answer that question. With KurrentDB, the answer is already in the event stream.

These demos are just a starting point. Once context, causality, and a single source of truth are in place, the possibilities expand quickly. New questions become answerable. New capabilities become buildable. Things that used to require weeks of forensic work become queries.

To borrow from Star Trek — you can boldly go where no financial institution has gone before.

The AI Problem Is Still the Architecture Problem

In Part 1 we made the case that payments reliability is a data architecture problem, not a database problem. (Payments Reliability Is Not a Database Problem. It’s a Data Architecture Problem Part 1)

Part 2 is the same argument, one layer up.

The demos we built didn’t set out to prove an architectural point. We built them to show what was possible when AI runs directly against a live event stream — the same stream the operational system uses, with no ETL, no preprocessing, no vector chunks that lose sequence.

What came back from those demos — in rooms with fraud teams, compliance officers, and engineering leaders — was recognition. Not a new idea. Of a problem they already had, finally described in a way that pointed somewhere useful.

The financial institutions that get AI right over the next few years will be the ones that figured out, earlier than everyone else, that the model is only as good as the history it’s working from.

And history has to be designed for.

Without that history — as Spock would say — you’re working with insufficient data.

Live long and prosper.

This Series

Part 1: Payments Reliability Is Not a Database Problem. It’s a Data Architecture Problem.

Part 2: AI in Financial Services Has a Data Problem. Nobody Is Talking About the Right One. (you are here)

Part 3: Why Traditional Architectures Fail at Scale — Coming next.

Kurrent builds KurrentDB, an event-native database designed for financial services and other systems where history, traceability, and replay are first-class requirements.